Data Science Interview Questions

Data science extends statistics to handle the vast volumes of data generated today. It is a thriving, sought-after career path for highly skilled professionals, and the field continues to advance. Aspiring data scientists can prepare for job interviews by working through a diverse range of data science interview questions covering methods and algorithms, data analysis, machine learning, statistics, artificial intelligence, and more. This guide collects such questions to give candidates the knowledge and confidence they need to do well in an interview.

Ready to dive in? Then let's get started!

What is Data Science?

Data science is a multidisciplinary field that employs scientific methods, advanced algorithms, and sophisticated techniques to extract patterns and derive meaningful insights from raw data. It encompasses a diverse range of approaches, such as statistical analysis, machine learning, and data mining, to unlock valuable information that can drive informed decision-making and create actionable knowledge.

Does very little data lead to the best model?

No, it leads to underfitting.

Underfitting occurs when a model fails to capture the relationship between the input and output variables, so it performs poorly and predicts inaccurately. This often happens when there is too little data to build a robust model. With a very large dataset, say billions of data points, the model can better distinguish outliers from the underlying data distribution and so predict more reliably. With an extremely limited dataset, say only 10 data points, the model may fail to pick up the underlying patterns and generalize poorly to new data. In such cases the model does not represent the true structure of the data. Note that a very flexible model trained on so few points can also overfit, memorizing the points rather than the pattern. To combat underfitting, data scientists can gather more data, use more expressive models, or refine feature engineering so that the model captures the underlying relationships within the data.
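As a rough illustration of a model that lacks the complexity to represent the data, the sketch below compares a constant predictor with a least-squares line on a small, nearly linear dataset. The data values and variable names are made up for the example.

```python
import math
from statistics import mean

# Hypothetical toy data with a clear linear trend.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

def rmse(predictions):
    """Root mean squared error of predictions against ys."""
    return math.sqrt(mean((y - p) ** 2 for y, p in zip(ys, predictions)))

# Model A: always predict the mean (too simple, so it underfits the trend).
const_pred = [mean(ys)] * len(xs)

# Model B: ordinary least-squares line y = slope * x + intercept.
x_bar, y_bar = mean(xs), mean(ys)
slope = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
         / sum((x - x_bar) ** 2 for x in xs))
intercept = y_bar - slope * x_bar
line_pred = [slope * x + intercept for x in xs]

print("constant model RMSE:", rmse(const_pred))
print("linear model RMSE:  ", rmse(line_pred))
```

The constant model ignores the trend entirely, so its error stays large however the data is sampled; the line captures the relationship and its error is much smaller.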

What is Pattern Recognition?

Pattern recognition is a scientific discipline that enables the classification of objects into various categories or classes, thereby facilitating analysis and enhancement of specific aspects. It encompasses the capacity to identify and discern regularities or patterns in diverse forms of data, ranging from text and images to sounds and other definable attributes. This methodology heavily relies on the utilization of Machine Learning algorithms, which play a crucial role in identifying and extracting the underlying regularities and patterns from the given data. Through pattern recognition, Data Scientists can gain valuable insights, make data-driven decisions, and develop innovative solutions in various domains such as image recognition, natural language processing, and speech recognition, among others.

For example, k-means is a machine learning clustering algorithm. When it runs, it finds patterns in your data and tries to split the data into distinct clusters.
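A minimal sketch of the k-means idea follows. It assumes the simplest possible initialization (the first k points serve as starting centroids) and a fixed number of iterations; the function name and toy data are illustrative, and production libraries typically use more careful initialization.

```python
def sq_dist(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(points, k, iterations=10):
    """Plain k-means: alternate between assigning points and moving centroids."""
    centroids = [tuple(p) for p in points[:k]]  # assumption: naive initialization
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [min(range(k), key=lambda c: sq_dist(p, centroids[c]))
                  for p in points]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = tuple(sum(col) / len(members)
                                     for col in zip(*members))
    return labels, centroids

# Toy data: two visibly separate groups (made-up values).
points = [(1.0, 1.0), (8.0, 8.0), (1.2, 0.8), (8.2, 7.9), (0.9, 1.1), (7.8, 8.1)]
labels, centroids = kmeans(points, k=2)
print(labels)
print(centroids)
```

No labels are supplied; the grouping comes only from the distances between points, which is what makes this a pattern-finding (unsupervised) method.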

Pattern recognition is a highly versatile and indispensable discipline with a wide array of applications, encompassing diverse fields such as image processing, speech recognition, aerial photo interpretation, optical character recognition, and even medical imaging and diagnosis. In image processing, it aids in identifying and analyzing objects and features within images, facilitating tasks like object detection and facial recognition. Moreover, in speech recognition, pattern recognition algorithms efficiently transcribe spoken words to text or interpret spoken commands, enhancing human-computer interactions.

Additionally, pattern recognition plays a crucial role in aerial photo interpretation, where it enables the identification and analysis of various features in aerial images, such as land use, vegetation, and infrastructure. Furthermore, it significantly contributes to optical character recognition (OCR), automating the conversion of scanned text or handwritten characters into digital formats for seamless data processing. Notably, in the medical domain, pattern recognition empowers advanced medical imaging and diagnosis, aiding in the detection and classification of anomalies in medical images like X-rays, MRIs, and CT scans, thereby bolstering healthcare practices and diagnosis accuracy. The diverse applications of pattern recognition underscore its immense impact across industries, revolutionizing automation, data analysis, and decision-making processes in modern society.

What are the major steps in exploratory data analysis?

Exploratory data analysis (EDA) serves as a powerful technique for data exploration and understanding, offering valuable insights into the dataset's characteristics and patterns. By using EDA, data analysts can readily identify common errors and inconsistencies, ensuring data quality and integrity. Furthermore, EDA facilitates the detection of outliers or anomalous events, which may indicate potential data issues or unique occurrences within the dataset. Additionally, through thorough exploration, EDA unveils significant relationships and correlations among variables, shedding light on underlying connections that may influence the data's behavior. In essence, EDA plays a crucial role in laying the foundation for further data modeling and analysis, enabling data scientists and researchers to make informed decisions and draw meaningful conclusions from the dataset. The major steps to be covered are below:

- Handling missing values

- Removing duplicates

- Outlier Treatment

- Normalizing and Scaling Numerical Variables

- Encoding categorical variables (dummy variables)

- Bivariate Analysis
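The steps above can be sketched on a tiny dataset using only the Python standard library. The records, the median fill, the 1.5 x IQR clipping rule, and min-max scaling are all choices made for this illustration; real projects usually pick these per variable and often use a dataframe library instead.

```python
from statistics import median, quantiles

# Hypothetical records (age, city); None marks a missing value.
rows = [(25, "Paris"), (None, "Rome"), (31, "Paris"), (31, "Paris"),
        (29, "Rome"), (27, "Paris"), (33, "Rome"), (120, "Rome")]

# 1. Missing values: fill with the median of the observed ages.
fill = median(age for age, _ in rows if age is not None)
rows = [(fill if age is None else age, city) for age, city in rows]

# 2. Duplicates: keep the first occurrence (dicts preserve insertion order).
unique = list(dict.fromkeys(rows))

# 3. Outliers: clip ages to the 1.5 * IQR fences.
q1, _, q3 = quantiles([age for age, _ in unique], n=4)
low, high = q1 - 1.5 * (q3 - q1), q3 + 1.5 * (q3 - q1)
clipped = [(min(max(age, low), high), city) for age, city in unique]

# 4. Scaling: min-max scale ages to the range [0, 1].
ages = [age for age, _ in clipped]
lo, hi = min(ages), max(ages)
scaled = [(age - lo) / (hi - lo) for age in ages]

# 5. Encoding: one dummy (0/1) column per city.
categories = sorted({city for _, city in clipped})
one_hot = [[int(city == c) for c in categories] for _, city in clipped]

# 6. Bivariate analysis: mean scaled age per city.
mean_by_city = {
    c: sum(s for s, (_, city) in zip(scaled, clipped) if city == c)
       / sum(1 for _, city in clipped if city == c)
    for c in categories
}
print(mean_by_city)
```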

What is Genetic Programming?

Genetic Programming is a specialized application of Genetic Algorithms, a subset of machine learning techniques. It emulates natural evolutionary processes to solve complex problems. In Genetic Programming, the goal is to evolve a solution to a problem by iteratively evaluating the fitness of each potential candidate within a population across multiple generations. During each generation, new candidate solutions are created by introducing random changes through mutation or swapping parts of existing candidates through crossover.

The least fit candidates are progressively eliminated from the population, allowing the fittest candidates to propagate and refine their traits, eventually converging towards a more optimal solution to the problem at hand. Genetic Programming finds utility in a diverse range of problem-solving tasks and optimization challenges, capitalizing on its ability to simulate nature's evolutionary principles to drive efficient and effective problem-solving processes.
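The evolutionary loop described above (evaluate fitness, drop the least fit, create new candidates by crossover and mutation) can be sketched as follows. Note the simplification: this is a plain genetic algorithm over bit strings on the toy "OneMax" problem, not full genetic programming, which would evolve program trees. The function name, population size, and other parameters are assumptions chosen for illustration.

```python
import random

def evolve(length=20, pop_size=30, generations=40, seed=1):
    """Evolve bit strings toward all ones; fitness is the number of 1 bits."""
    rng = random.Random(seed)
    fitness = sum
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    history = []  # best fitness seen at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        survivors = population[: pop_size // 2]  # eliminate the least fit half
        children = []
        while len(survivors) + len(children) < pop_size:
            mum, dad = rng.sample(survivors, 2)
            cut = rng.randrange(1, length)
            child = mum[:cut] + dad[cut:]        # crossover: swap parts
            i = rng.randrange(length)
            child[i] = 1 - child[i]              # mutation: flip one random bit
            children.append(child)
        population = survivors + children
    return max(population, key=fitness), history

best, history = evolve()
print("best fitness:", sum(best), "of", len(best))
```

Because the fittest half always survives unchanged, the best fitness in `history` never decreases from one generation to the next.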

What is the difference between Classification and Regression?

The primary distinction between classification and regression lies in their predictive outcomes. In classification tasks, the objective is to predict or categorize data into discrete labels or classes, exemplified by binary outcomes like True or False, distinguishing spam from non-spam, or multi-class classifications like different types of animals or colors. Conversely, regression tasks involve forecasting continuous numerical values, encompassing a wide spectrum of real numbers, such as predicting prices, salaries, ages, or any other continuous variables. In summary, classification pertains to discrete label assignments, whereas regression revolves around predicting continuous numeric quantities, making them distinct and appropriate techniques for addressing different types of machine learning challenges.

A regression algorithm is often evaluated by computing the Root Mean Squared Error (RMSE) of its output. RMSE is the square root of the mean squared difference between the predicted continuous values and the actual target values in the dataset, so it summarizes the typical size of the prediction error in the units of the target. Lower RMSE values indicate a more precise and reliable regression model.

Conversely, a classification algorithm is most commonly evaluated by its accuracy: the fraction of input data points that it assigns to the correct class or label. Higher accuracy indicates a better classifier, although accuracy alone can be misleading when the classes are imbalanced.
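Both metrics are short to compute by hand. The sketch below uses made-up values; the function names are illustrative rather than taken from any particular library.

```python
import math

def rmse(y_true, y_pred):
    """Root mean squared error for continuous predictions."""
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(y_true))

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true class labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Regression: errors are 1, 0, -2, -1, so RMSE = sqrt(6 / 4).
print(rmse([3.0, 5.0, 2.0, 7.0], [2.0, 5.0, 4.0, 8.0]))

# Classification: three of the four labels are correct.
print(accuracy(["spam", "ham", "spam", "ham"], ["spam", "ham", "ham", "ham"]))
```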

How to use labelled and unlabelled data?

Labelled data is data that includes meaningful tags or classes assigned to each observation, often obtained through observations, expert input, or manual labeling. These labels provide valuable information about the nature of each data point, making it suitable for supervised learning tasks. In contrast, unlabelled data lacks such informative tags or classes, and there is no specific explanation associated with each data point.

Supervised learning involves using labelled datasets to train algorithms for tasks such as classification and regression. In classification, the algorithm learns to categorize data points into predefined classes, while regression aims to predict continuous numeric values. The presence of meaningful labels in the labelled dataset enables the algorithm to learn and make accurate predictions based on the provided information.

On the other hand, unsupervised learning deals with unlabelled datasets. In this approach, algorithms seek to identify patterns, relationships, or groupings within the data without the need for pre-defined labels. Unsupervised learning methods, like clustering and dimensionality reduction, are valuable for uncovering insights from unstructured or unlabelled data, aiding in data exploration and pattern discovery.

How to deal with unbalanced data?

Imbalanced data refers to a common issue in machine learning where the distribution of target classes within a dataset is skewed or unequal. In other words, one or more classes in the target variable have a significantly larger number of instances compared to other classes. This imbalance in class distribution can lead to biased model training and affect the performance of machine learning algorithms. There are several techniques to handle the imbalance in a dataset:

- Change the performance metric

- Resample your dataset

- Oversample the minority class

- Undersample the majority class

- Cluster the abundant class

- Ensemble different resampled datasets

- Try different algorithms

- Generate synthetic samples
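As one example from the list, random oversampling of the minority class can be sketched as below. The function name and toy data are assumptions for illustration; the sketch simply duplicates minority samples at random until every class is as large as the biggest one.

```python
import random
from collections import Counter

def random_oversample(samples, labels, seed=0):
    """Duplicate randomly chosen minority samples until all classes match."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_x, out_y = list(samples), list(labels)
    for cls, n in counts.items():
        pool = [x for x, y in zip(samples, labels) if y == cls]
        extra = rng.choices(pool, k=target - n)  # sampling with replacement
        out_x.extend(extra)
        out_y.extend([cls] * (target - n))
    return out_x, out_y

# Toy data: 8 samples of class 0 and only 2 of class 1.
X = [[i] for i in range(10)]
y = [0] * 8 + [1] * 2
X_bal, y_bal = random_oversample(X, y)
print(Counter(y_bal))
```

Oversampling should be applied to the training split only; duplicating samples before splitting would leak copies of the same point into the evaluation data.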

If you have a small dataset, how would you handle it?

There are several ways to handle this problem. The following are a few techniques:

- Choose simple models

- Remove outliers from data

- Combine several models

- Rely on confidence intervals

- Apply transfer learning when possible

- Find ways to extend the dataset
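To illustrate relying on confidence intervals, the sketch below computes a percentile bootstrap interval for the mean of a small sample. The bootstrap is one possible way to obtain such an interval, chosen here as an assumption; the function name, sample values, and resample count are illustrative.

```python
import random
from statistics import mean

def bootstrap_ci(values, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the mean."""
    rng = random.Random(seed)
    # Resample with replacement and record the mean of each resample.
    means = sorted(mean(rng.choices(values, k=len(values)))
                   for _ in range(n_resamples))
    lower = means[int(n_resamples * alpha / 2)]
    upper = means[int(n_resamples * (1 - alpha / 2)) - 1]
    return lower, upper

# Hypothetical small sample of measurements.
values = [12.1, 9.8, 11.4, 10.9, 13.2, 10.1, 11.7, 12.5]
lower, upper = bootstrap_ci(values)
print(f"mean = {mean(values):.2f}, 95% CI = ({lower:.2f}, {upper:.2f})")
```

Reporting the interval alongside the point estimate makes it visible how much uncertainty a small sample leaves.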

What is Cluster Sampling?

Cluster sampling is a well-established sampling technique used in research and data collection, especially when the target population is large or geographically dispersed. In cluster sampling, the entire population is divided into multiple clusters or groups based on certain logistical considerations, such as geographic locations or administrative divisions.

To conduct the sampling, researchers randomly select clusters from the entire population using either simple random sampling or systematic random sampling. Once the clusters are chosen, data collection and analysis are performed within the selected clusters. For instance, in a study involving households, geographic areas may be chosen as clusters in the first stage, and then households are selected from each chosen area in the second stage.

Cluster sampling is particularly useful when it is impractical or costly to collect data from each individual in the population. By selecting clusters and sampling within those clusters, researchers can efficiently obtain representative data from a diverse range of locations or groups.

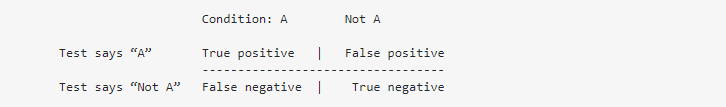
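The two-stage household example can be sketched as follows. The function name, area names, and household identifiers are hypothetical, and simple random sampling is assumed at both stages.

```python
import random

def two_stage_cluster_sample(clusters, n_clusters, n_per_cluster, seed=0):
    """Stage 1: pick clusters at random. Stage 2: sample units inside each."""
    rng = random.Random(seed)
    chosen = rng.sample(sorted(clusters), n_clusters)
    return {
        name: rng.sample(clusters[name],
                         min(n_per_cluster, len(clusters[name])))
        for name in chosen
    }

# Hypothetical population: households grouped by geographic area.
population = {
    "north": ["N1", "N2", "N3", "N4"],
    "south": ["S1", "S2", "S3"],
    "east": ["E1", "E2", "E3", "E4", "E5"],
    "west": ["W1", "W2"],
}
sample = two_stage_cluster_sample(population, n_clusters=2, n_per_cluster=2)
print(sample)
```

Only the selected areas are visited, which is where the cost saving over sampling individual households across the whole population comes from.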

Define False Positive and False Negative

- False Positive - A case that is actually False (negative) but gets classified as True (positive).

- False Negative - A case that is actually True (positive) but gets classified as False (negative).

For example, a classification or screening test has four possible outcomes: true positive, false positive, true negative, and false negative.
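The four outcomes of a binary test (true positive, false positive, true negative, false negative) can be counted directly from the actual and predicted labels. The function name and sample labels below are made up for illustration.

```python
def confusion_counts(actual, predicted):
    """Count the four outcomes of a binary classifier or screening test."""
    counts = {"TP": 0, "FP": 0, "TN": 0, "FN": 0}
    for a, p in zip(actual, predicted):
        if p and a:
            counts["TP"] += 1   # predicted True, actually True
        elif p and not a:
            counts["FP"] += 1   # predicted True, actually False
        elif not p and a:
            counts["FN"] += 1   # predicted False, actually True
        else:
            counts["TN"] += 1   # predicted False, actually False
    return counts

actual = [True, True, False, False, True, False]
predicted = [True, False, True, False, True, False]
print(confusion_counts(actual, predicted))
```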

Continued in Data Science Interview Questions (Part 2)